"AI Must be Fair, Explainable, and Unbiased to Deliver Concrete Business Results"

Interview with Rachel Alexander, Founder and CEO at Omina Technologies

Artificial Intelligence

Is there a magic recipe to implement AI efficiently in today's ever-changing business world? According to Rachel Alexander, Founder and CEO at Omina Technologies, companies must be aware of their crucial role in driving artificial intelligence by having a concrete AI strategy that takes into account fairness, explainability, and bias in their data

AI IEN: Could you tell us a few words about you and Omina Technologies?

R. Alexander: I founded Omina Technologies in Belgium in 2016. The idea was to create an AI company for small and big companies, which incorporates the principles of Ethical Artificial Intelligence to make sure that AI is fair, explainable and that it investigates inherent bias in the data. Those were my three main concerns. At that time, the US elections were still ongoing, and people didn't understand the consequences of non-ethical artificial intelligence. At the same time, in 2016 and 2017, I traveled around Europe, giving talks about fair and trustworthy AI. Looking at the market, I noticed that Belgium was quite ready for AI, but something worried me. Mainly big companies were doing AI. That's how it all started.

AI IEN: How has the company evolved during these years?

R. Alexander: Today, there's so much going on around artificial intelligence, and we have grown fast during these four years. In 2016 we were a small start-up, and now we are 20 people just in Belgium and we have employees worldwide.

We started as a research and development company focused on creating ethical, explainable, and fair AI. We soon understood the importance of education in this field and we established a consultancy service called Omina AI Readiness Assessment, helping our clients to identify a competitive AI strategy. For today's businesses, it is fundamental to match their AI strategy with their company's strategy. For example, you will use AI in one particular way to improve operational efficiency, whereas if you seek to add value to your services and products or even bring an utterly new offering to the market, then different artificial intelligence approaches are required.

We noticed with our consultancy that many companies are getting frustrated now because they feel that AI has not been implemented strategically. This makes it harder to integrate AI into production. It is here that we step in. With our methodology and platform, we can efficiently implement AI and create acceptance within the company. Once you have aligned AI strategically, you want to ensure that it is fair, that you have mitigated bias in the data, and that it is explainable. AI makes important decisions and predictions. If you can't explain why, then your business won't accept it.

AI IEN: How beneficial is it to be based in Brussels, close to the EU institutions, for your job?

R. Alexander: It is certainly very important. We have been involved with the EU working groups from the beginning. Already at the end of 2016, I was committed to explaining to the Belgian government how important it is to have a trustworthy, ethical, and explainable AI. So we are very well positioned here in Brussels and in Antwerp to effect change in that matter.

Thanks to this dissemination work, companies, governments, and legislators are understanding the problem. The questions are currently getting more practical: "What do we do now?" or "How do we take the next steps to make sure to have a fast return on investment while being ethical?" Answering those questions is our expertise. We want to be pragmatic and talk to the business in business language.

AI IEN: How relevant is the role of small companies in shaping AI?

R. Alexander: The idea that only big companies can do AI is not true. Small and medium-sized companies can leverage its potential as well. We believe in giving AI as an answer to all different businesses. We have built this very powerful platform that enables companies to do artificial intelligence themselves. They don't have to get an army of specialized scientists to get started with AI anymore, but they can work directly with Omina's AI Platform, knowing that it is an inherently fair system that integrates trustworthy artificial intelligence into production.

That's how companies can get into production fast with AI. If they miss any of these steps, whether it is the strategy or mitigating inherent bias in the data, or if they don't understand the use case and what they really want, it will be hard for them to drive artificial intelligence as they should. In the end, it all revolves around what companies are trying to achieve from a business perspective.

AI IEN: Do companies have a clearer idea of what they want now?

R. Alexander: The maturity level has improved quite a lot. In 2015 and 2016 companies really didn't understand AI, so I spent most of my time explaining what AI is and what our company can do. I believe there is a lot of understanding of the potential of AI now. At the same time, there is growing frustration within companies. They see proofs of concept springing up within their company without a return on investment.

It is difficult for businesses to understand why they are not getting results given that AI is supposed to be one of the most powerful technologies of the future. That is when they come to us. Thanks to our agile methodology, they can see results very quickly.

AI IEN: Could you tell me more about the platform that you have developed?

R. Alexander: This idea that the business should drive artificial intelligence is the cornerstone of our system. If you look at the different cases and you want to know, for instance, which customers are most likely to leave the company, you need to have clear goals. Do you want to know every single customer potentially at risk or just the most valuable ones? Or maybe you want to understand why your customers might be at risk – and that is the explainability part. There are different ways to go about that. Each business goal requires a different AI solution design, so a different approach. We built this platform to enable the business to put in its constraints and goals directly and get production-ready algorithms very quickly. It provides a sort of "recipe" for quick implementation.

Besides, business people know better than anyone else if there is any bias within the data. Typical cases occur when AI is used to look for the best CEO. Looking purely at historical data would discriminate against women, as there are not that many women CEOs. It is essential to be careful when talking about legislation. For instance, there are anti-discrimination laws around giving loans. The same applies to hiring/firing decisions and compliance with GDPR, to mention other examples. That is why considering the potential inherent bias in your data while including explainability is so crucial if you don't want to get into trouble or have liability issues. AI must be fair, explainable, and unbiased to deliver concrete business results.

AI IEN: How does your platform guarantee ethical AI?

R. Alexander: From a technical perspective, our platform asks businesses if they know about their data's inherent bias. But it could be that they are not aware of it. In this case, we have the ability to scan the data, pick up any bias, and feed that back to the business, highlighting the potential risks and conflict with existing legislation. By specifying various scenarios in the software, companies can proactively assess how different priorities, or a different trade-off between competing priorities, affect the generated AI solution. For example, take a scenario in which solutions are prioritized on predictive performance, interpretability, and group fairness. If the solution is optimized for group fairness, potentially at the expense of predictive performance, and for predictive performance, potentially at the expense of interpretability, it calls for a different AI solution design than a scenario with the same priorities in which the user optimizes for predictive performance, potentially at the expense of group fairness. Afterwards it is easier to justify the implemented AI solution to various stakeholders by referring to the alternative prioritization scenarios that have been compared.
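The trade-off between predictive performance and group fairness described above can be made concrete with a small Python sketch. It is only an illustration under stated assumptions: the toy data, the two candidate models, the `choose` helper, and the use of the gap in positive-prediction rates as the fairness measure are all hypothetical and do not describe how Omina's platform works.

```python
# Hypothetical toy data; none of these values come from Omina's platform.
y_true = [1, 1, 1, 0, 1, 0, 0, 0]                    # observed outcomes
group = ["a", "a", "a", "a", "b", "b", "b", "b"]     # protected attribute

# Predictions of two hypothetical candidate solutions.
candidates = {
    "accurate_model": [1, 1, 1, 0, 1, 0, 0, 0],
    "fair_model": [1, 1, 0, 0, 1, 1, 0, 0],
}


def accuracy(y_true, y_pred):
    """Share of predictions that match the observed outcome."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def parity_gap(y_pred, group):
    """Largest difference in positive-prediction rate between groups."""
    rates = []
    for g in sorted(set(group)):
        preds = [p for p, member in zip(y_pred, group) if member == g]
        rates.append(sum(preds) / len(preds))
    return max(rates) - min(rates)


def choose(candidates, y_true, group, optimize_for):
    """Pick a candidate according to the stated priority (assumed rule)."""
    scores = {
        name: (accuracy(y_true, pred), parity_gap(pred, group))
        for name, pred in candidates.items()
    }
    if optimize_for == "group_fairness":
        # Smallest gap first; accuracy only breaks ties.
        return min(scores, key=lambda n: (scores[n][1], -scores[n][0]))
    if optimize_for == "predictive_performance":
        # Highest accuracy first; the gap only breaks ties.
        return max(scores, key=lambda n: (scores[n][0], -scores[n][1]))
    raise ValueError(f"unknown priority: {optimize_for}")


for priority in ("group_fairness", "predictive_performance"):
    print(priority, "->", choose(candidates, y_true, group, priority))
```

With these toy numbers the two priorities select different candidates, which is the point of comparing scenarios: the same data and the same list of priorities can lead to a different solution once the preferred trade-off changes.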

Another critical point is that our platform supports a proactive non-discrimination approach. It is not sufficient to react to unfair situations; it is vital to detect and mitigate bias proactively, by taking specific steps in the AI pipeline and actively applying model bias mitigation. This methodology gives companies extra objectives, such as investing in predictive performance while simultaneously optimizing for, for instance, gender non-discrimination. A non-discrimination approach should not be an afterthought; it should be adopted from the beginning and implemented as an AI design constraint.

Sara Ibrahim

A Virtual Skin for 2020 AI Convention Europe

AI Convention Europe was launched in 2018 as a one-day conference targeting international B2B AI professionals. This year, its third edition will take place digitally on October 20, 21, and 22 in the form of a three-day webinar

Artificial Intelligence, Covid-19

Coronavirus may have frozen our social interactions for a while, but it hasn’t prevented people from meeting and discussing important topics, at least digitally. Like many other events, AI Convention Europe, the conference for AI professionals organized by TIMGlobal Media and Mark-Com Event, opted for a digital format. Still, the news this year is that the one-day conference becomes a three-day webinar taking place on the 20th, 21st, and 22nd of October 2020.

Unlike previous years, where numerous speakers took the floor to discuss various issues ranging from law to business, industry, and ethics, this edition will be highly focused on specific topics. The program will consist of three webinars of 90 minutes each, conducted by three top experts in the field of artificial intelligence.

AI for competition, traceability, and business value

The AI webinar series will be opened by Salomé Cisnal de Ugarte, Managing Partner at Hogan Lovells, with a discussion that focuses on targeted pricing policies made possible by AI and their potential harm and biases.

Indeed, AI has already made possible variable pricing schemes that increase profit based on consumer profiles and contingency. For example, some companies use selective algorithms to determine the price of a flight ticket in real time on the basis of the consumer's profiling data. This translates into higher or lower prices based on the type of device used: if it’s an old PC, you’ll pay less, while for the newest Macintosh, you’ll pay more. Similarly, Uber’s AI technology will raise the price of a ride in emergency situations where demand, and with it profitability, is high.

On day 2, Matthieu Hug, CEO & Founder at Tilkal, will discuss the opportunities lying in blockchain for smart traceability. Traceability 4.0 will make traceability information constantly available in real-time. As it happens with the digital thread, the idea of implementing a holistic approach to trace each product at every step of the production process can bring significant operational improvements. The fragmented observation evolves into a continuous analysis of the data, which are available both upstream and downstream.

The event will close its "screens" on day 3 with significant insight into how AI and digital platforms can be critical to the manufacturing industry. Eric Prevost, Vice President Strategy Manufacturing & Industry 4.0 at Oracle France, will discuss macro-themes like companies' survival in times of crisis and new business models in the light of embracing new-age technologies such as Cloud, AI, IoT, and Blockchain to create new business opportunities and value. Needless to say, the transition to the digital factory is inevitable.

Free online registration open

The webinars are free of charge. Anyone interested can register on the AI Convention website and attend one or all of the conferences. Don’t forget that IEN Europe is the official media partner of AI Convention Europe. Our readers are warmly invited not to miss this great event!


Sara Ibrahim

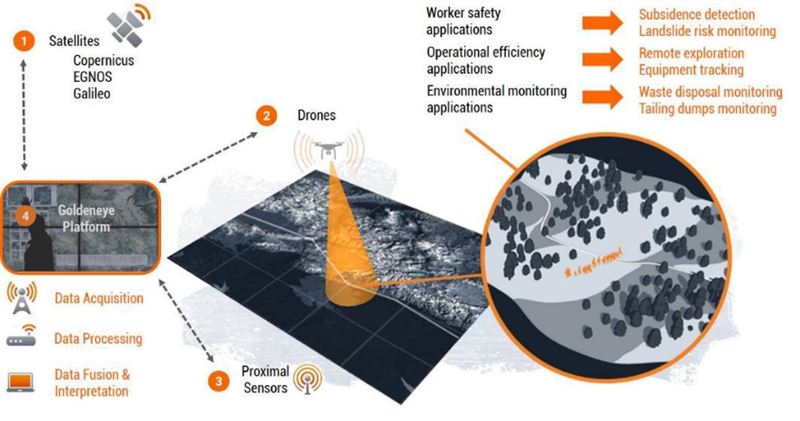

The EU Commission Has Financed an AI Platform for Mines that Uses Earth Observation

The H2020 project was financed with EUR 8.4 million. The objective is to develop an AI platform for the monitoring and analysis of mine sites across Europe using earth observation technologies to improve safety, environmental impact and profitability

Artificial Intelligence, Sensor Technology, Test & Measurement, Vision & Identification

The European Commission has granted EUR 8.4 million to a three-year H2020 project that develops an artificial intelligence platform for the monitoring and analysis of mine sites across Europe. The project, called Goldeneye, will develop solutions that improve safety, environmental impact and profitability of mines by creating a platform that combines earth observation technologies with on-site sensing.

European production and infrastructure depend on a supply of high-quality raw materials. Ensuring that the needed materials are produced responsibly in European mines guarantees sustainable supply and prevents European countries from becoming dependent upon imports from global markets. To support the development of the European mining industry through technological solutions, the Goldeneye platform integrates information from satellite produced Earth Observation Data (EOD), drone overflight sensors and on-site recorded data.

The project is coordinated by VTT Technical Research Centre of Finland and the consortium consists of 16 European companies and research partners. The consortium works together in platform development and in bringing together the work of sensing experts, solution providers, and European mines.

Combining data to minimize risks

The Goldeneye project will implement a unique combination of remote sensing and positioning technologies with data analytics and machine-learning algorithms. The platform will allow satellites, drones and in-situ sensors to collect high-resolution data of the entire mine, which can then be processed and converted into actionable intelligence that allows more efficient exploration, extraction and closure.

“With Goldeneye, we are not solving just one problem. Our goal is to bring together different technologies in a platform that can offer innovative solutions to both businesses and governments and have a positive effect on the mining industry as a whole,” says the project coordinator Marko Paavola from VTT.

The project will also improve the analysis of the mine site mineralogy using drone-integrated geophysical sensors as well as innovative proximity sensors including active hyperspectral sensing and time-resolved Raman spectroscopy. The platform integrates this information with the data gained from the imagery and radars of available satellites including Copernicus and Sentinel systems as well as global satellites.

Applications for every stage of the lifecycle

The use cases of the Goldeneye platform address the different phases of the mine’s life cycle from exploration to closure and post-closure. The applications developed in the project include mineral detection, safety monitoring, operational management, geo-hazard monitoring, and environmental monitoring. For example, the safety monitoring application aims to improve the safety of the mines by monitoring the mining sites for sudden slope and ground changes, and by analysing the environment of the mines to detect any mining water leakages from their indirect influence on the surrounding nature.

Five pilot sites across Europe

During the project, the Goldeneye platform will be piloted on five mining sites across Europe. Two pilot sites, Pyhäsalmi mine in Finland and Trepča Mines Complex in Kosovo, will focus on the study of the environmental impact and stability of the mines. The Pyhäsalmi mine will also be used as a test site for indoor GNSS location positioning and turn-by-turn navigation trials. Accurate location improves the safety of the underground mines as it enables better tracking of the mining activities.

Two field trial sites, Erzgebirge district in Germany and Panagyurishte district in Bulgaria, will focus on mineralogical mapping. The geophysical and proximity sensors will be used to calibrate satellite information and teach AI algorithms. The aim is to develop mineral exploration with higher resolution imaging and improved mapping of valuable mineral deposits.

The fifth site in the Roşia Poieni district in Romania aims to improve mineral predictions with combined satellite imagery and drone-produced data. The aim is to improve profitability, and support the mining community of the area.

Furthermore, the project will develop a mineralogical sensor solution for the ore drilling integrating Sandvik drilling machines with time-gated Raman sensors. This will improve the mineral extraction efficiency and support the profitability of the mines.

Consortium partners:

AKG sh.p.k, Beak Consultants, Cuprumin, Dares Technologies, Earth observing system, Galileo Satellite Navigation, OPT/NET, Radai, Sandvik Mining and Construction, Sinergise, Sitemark, Technical University of Cluj Napoca, Timegate Instruments, University of Oulu and University of Sofia

Cloud Computing will Boost Mobile Robotics by 2030

ABI Research forecasts the robot-related services powered by cloud computing will reach US$157.8 billion in annual revenue by 2030

Artificial Intelligence, Automation, Industry 4.0

Though only in its nascent stages, the value of cloud infrastructure to robots is key for both deployment (encompassing development, configuration, and installation) and operation (maintenance, analytics, and control). With the popularization of mobile robotics in a wide range of verticals, it will become necessary to utilize the computing power of cloud infrastructure to store and manage the vast troves of collected data as well as to train more advanced algorithms used to power robot cognition. ABI Research, a global tech market advisory firm, forecasts the robot-related services powered by cloud computing will reach US$157.8 billion in annual revenue by 2030.

“Since 1961, most commercial robots have been wired or tied to external infrastructure for movement. The next generation of robot deployments will be increasingly mobile, tied to cellular and WIFI connectivity, will consume vast troves of data in order to operate autonomously, and will need effective management through real-time measurements for performance, status and operability,” said Rian Whitton, Senior Analyst at ABI Research. Several cloud service providers, including AWS, Microsoft Azure, and Google Cloud, have begun collaborating with robotics developers, while start-ups like InOrbit target cloud-enabled operations for the first major deployment of mobile service robots.

Sophisticated robots

“The journey of the robot industry from one of individual vehicles and units, to fleets and larger systems, is being driven by its wider incorporation into the IoT ecosystem. However, it would be a mistake to suggest robots will simply fit in with devices, individual sensors, and stationary machines as part of the wider IoT ecosystem,” Whitton points out. Robots are increasingly sophisticated systems themselves, with multiple sensors and highly advanced Artificial Intelligence (AI)/Machine Learning (ML) competencies, and are also expected to move around and act within the world, generating huge amounts of data relative to other machines. “To suggest the cloud alone can provide the computing power to operate these machines is naïve, especially during the slow transition to 5G. Onlookers should instead conceive of adaptable edge-cloud systems that focus on quality over quantity when it comes to robotics operation, data processing, and analysis,” Whitton adds.

The cloud robotics opportunity, defined as Robotics-as-a-Service (RaaS) and Software-as-a-Service (SaaS) revenue for robotics operations combined, will grow from US$3.3 billion in 2019 to US$157.8 billion in 2030, accounting for 30% of the robotics industry’s total worth. On its own, this represents a huge opportunity for start-ups, many of which are beginning to expand on their mission to enable developers to accelerate their go-to-market strategy and to help end-users and operators access and manage the ever-increasing fleets of robots. This new robotics ecosystem will be dominated by three subcategories of companies, namely robot developers that move up the value chain and become solution providers, third-party IoT and cloud platform providers focused on best-in-class software solutions, and Cloud Service Providers (CSPs) like Microsoft Azure, Amazon Web Services (AWS), and Google Cloud. Those focusing strictly on hardware will lose relative worth and will require partnerships or bold strategies to become solution providers. This can be exemplified by companies like Universal Robots and Fetch Robotics, which have incorporated software and maintenance services into their offering.
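To put the forecast in perspective, the growth rate it implies can be worked out with a few lines of Python. This is a back-of-the-envelope sketch, not a figure from ABI Research: it assumes smooth compound growth between the two published values, and it assumes the 30% share refers to the 2030 total.

```python
def cagr(start_value, end_value, years):
    """Compound annual growth rate between two values."""
    return (end_value / start_value) ** (1 / years) - 1


# Figures quoted in the article (US$ billion).
revenue_2019 = 3.3
revenue_2030 = 157.8
share_of_industry_2030 = 0.30  # assumed to apply to the 2030 total

growth = cagr(revenue_2019, revenue_2030, 2030 - 2019)
implied_industry_total = revenue_2030 / share_of_industry_2030

print(f"Implied annual growth 2019-2030: {growth:.1%}")
print(f"Implied robotics industry total in 2030: US${implied_industry_total:.0f} billion")
```

Under these assumptions the forecast corresponds to growth of roughly 42% a year and a total robotics market of about US$526 billion in 2030.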

Vertical and horizontal innovation

“The market is incredibly nascent at present. ABI Research expects consolidation with the most successful robot solution providers and the CSPs expanding their relative influence on the market to take place within the next decade,” says Whitton. The cloud robotics technology is split between vertical innovations, such as developing superior navigation systems, which increase the possibility of what robots can do, and horizontal innovations that expand access and scalability. “Cloud computing represents the most important horizontal innovation for the robotics industry, to date, and will further enable vertical innovations like swarm-based intelligence, autonomous mobility and advanced manipulation to be deployed at scale,” Whitton concludes.

These findings are from ABI Research’s Commercial and Industrial Robotics application analysis report. This report is part of the company’s Industrial, Collaborative & Commercial Robotics research service, which includes research, data, and ABI Insights. Based on extensive primary interviews, Application Analysis reports present in-depth analysis on key market trends and factors for a specific technology.

"Agility is the Key to IIoT Innovation," Advantech Says at Global IIoT Summit

At its first Global IIoT Virtual Summit, Advantech stressed the importance of agile innovation as a key driver for the digital transformation in industrial applications

Artificial Intelligence, Automation, Industry 4.0

At its first Global IIoT Virtual Summit, entitled ‘Connecting Industrial IoT Innovation’, on Tuesday 22nd, Advantech IIoT President Linda Tsai sent a clear message to more than 2,000 delegates: agile innovation in the Industrial Internet of Things (IIoT) will be key to driving digital transformation in industrial applications into 2021 and beyond.

Ms Tsai explained: “Figures from IDC suggest that by 2025, there will be 41.6 billion IoT devices in use worldwide, generating 79.4 zettabytes of data. Connected devices will pervade every aspect of our personal and business lives, and a complex mix of technology and infrastructure will be more crucial than ever to harnessing the power of the data generated by these devices.

“As a leader in embedded computer systems for industrial applications, Advantech is leading the way in developing more powerful edge computing solutions which are compatible with all types of IIoT devices as well as data centres and cloud providers and can aggregate these vast quantities of data, allowing users to optimise operational effectiveness in their facilities.”

Pioneering through co-creation

Much of Advantech’s pioneering work in this area centres on its strategy of co-creation: collaborating closely with systems integrators and developers to create edge solution-ready platforms (Edge SRPs) or IApps (Industrial Applications) to make digital transformation as rapid and simple as possible. Advantech can support optimisation in areas from iFactory to industrial equipment manufacturing (IEM), industrial AI, smart automation, transportation, energy & environment and iLogistics.

Ms Tsai went on to identify six key technology trends for 2021: digital transformation, 5G, decoupling, device-to-cloud digitalisation, empowered edge and artificial intelligence (AI).

“According to the GSMA Mobile Economy 2019 Report, 5G will contribute more than US$2 trillion to the global economy up to 2035, of which 35 per cent will go to the manufacturing and utilities sectors. Meanwhile, research from MarketsandMarkets estimates the value of AI in manufacturing at US$17.2 billion by 2025.

“Advantech has developed an extensive portfolio of AI platforms including edge AI systems, sensors and inference servers, as well as deep learning training servers, to assist customers in exploiting the potential of AI. Meanwhile, in the area of device-to-cloud, we are again at the forefront of innovation, with solutions including private cloud solutions, industrial APP, edge intelligence software and cross-platform middleware – all specifically developed to combine optimised computing with robust and reliable performance in even the most demanding environments.”

The digital transformation as a must

“There is no getting away from the fact that digital transformation will impact every manufacturer in the world in the years to come, and harnessing the power of data will be critical to competitiveness as we move from Industry 4.0 and towards Industry 5.0.

“Our global Summit has brought together partners from across the world to find the best ways to collaborate and exploit the power of new and emerging technologies, to optimise efficiency, performance and commercial success.”

Jash Bansidhar, managing director of Advantech Europe, added: “The macro strategy of driving Industrial IoT development through the adoption of AI, 5G and edge computing is central to the further adoption and exploitation of IoT technologies. Our mission continues to be to work with ecosystem partners to deliver sustainable success in the post-pandemic era.”

For further information visit www.advantech.eu.

Mouser Electronics Announced Global Agreement with iWave

Thanks to this agreement, Mouser now stocks iWave’s SoMs based on Xilinx, NXP Semiconductors, and Intel® PSG technologies for industrial, automotive, medical, imaging, networking, and artificial intelligence (AI) applications

Artificial Intelligence, Electronics & Electricity, Test & Measurement, Vision & Identification

The global distributor Mouser Electronics announced a global distribution agreement with iWave Systems, enhancing the global reach of iWave’s extensive portfolio of system-on-modules (SoMs). Through the agreement, Mouser now stocks iWave’s SoMs based on Xilinx, NXP Semiconductors, and Intel® PSG technologies for industrial, automotive, medical, imaging, networking, and artificial intelligence (AI) applications.

Innovative System-on-modules portfolio

iWave’s Xilinx ZU19/17/11 Zynq UltraScale+ MPSoC SoMs incorporate Xilinx Zynq UltraScale+ multiprocessor SoCs (MPSoCs), which feature an innovative combination of FPGA fabric and six heterogeneous Arm® processor cores (four 64-bit Arm Cortex®-A53 and two 32-bit Arm Cortex-R5 cores). Featuring enhanced processing system and processing logic functionality, the SoMs also offer improved security and robustness and exceptional memory and transceiver performance for use in high-end applications such as 4K video, deep neural AI and machine learning, automotive imaging radar, and high-speed networking. Mouser also stocks the Xilinx ZU19/17/11 Zynq UltraScale+ MPSoC development kit, which includes the Zynq UltraScale+ MPSoC SoM and an ultra-high-performance carrier card with support for FMC, FMC+, QSFP, HDMI 2.0 input and output, 12G SDI input and output, USB Type-C, Gigabit Ethernet, and more.

iWave’s i.MX 8QuadMax SMARC SoMs, based on NXP i.MX 8QuadMax applications processors, are designed to achieve high-performance multimedia processing in demanding automotive, medical and industrial applications. Providing a complete solution on a highly integrated SMARC R2.0-compatible module, the SoMs incorporate the i.MX 8QuadMax’s four Arm Cortex-A53 cores, two Cortex-M4F cores, and two Cortex-A72 cores, as well as a HiFi 4 DSP core and two graphics processing units (GPUs). The SoM is capable of implementing rich, fully independent graphics content across four HD screens or one 4K screen. The i.MX 8QM/QP SMARC development kit includes the SoM and a SMARC carrier board with all the necessary connectors, displays, and inputs and outputs (I/Os).

iWave’s Intel Arria® 10 SoC SoMs are based on the Intel Arria 10 SX family FPGAs with dual Arm Cortex-A9 cores and up to 660K logic elements. The module includes 32-bit DDR4 memory support for HPS with ECC and 64-bit DDR4 support for FPGA. The Intel Arria 10 SoC SoM and associated development platform enable engineers to create FPGA-based designs in applications such as test and measurement, industrial controls, diagnostic medical imaging, and wireless infrastructure.

iWave, through its extensive portfolio of SoMs, strives to reduce time-to-market and simplify the product development process for organisations and industries across the globe.

To learn more, visit https://eu.mouser.com/manufacturer/iwave-systems/.

Post-Corona Recovery: High demand for “Robotics Skills”

The International Federation of Robotics says that the increased presence of robots in manufacturing is driving demand for skilled workers

Artificial Intelligence, Automation, Covid-19, Industry 4.0

By 2022, an operational stock of almost 4 million industrial robots is expected to work in factories worldwide. These robots will play a vital role in automating production to speed up the post-Corona economy. At the same time, robots are driving demand for skilled workers. Educational systems must effectively adjust to this demand, says the International Federation of Robotics.

“Governments and companies around the globe now need to focus on providing the right skills necessary to work with robots and intelligent automation systems,” says Milton Guerry, President of the International Federation of Robotics. “This is important to take maximum advantage of the opportunities that these technologies offer. The post-Corona recovery will further accelerate the deployment of robotics. Policies and strategies are important to help workforces make the transition to a more automated economy.”

World Economic Forum - Future world of work

“Very few countries are taking the bull by the horns when it comes to adapting education systems for the age of automation,” said Saadia Zahidi, in her role as Head of Education, Gender and Employment Initiatives at the World Economic Forum. “Those that are, have long had a clear focus on human capital development. Countries in northern Europe, as well as Singapore are probably running some of the most useful experiments for the future world of work.”

Economist Intelligence Unit - Automation Readiness Index

According to the “automation readiness index” published by The Economist Intelligence Unit (EIU), only four countries have already established mature education policies to deal with the challenges of an automated economy. South Korea is the category leader, followed by Estonia, Singapore and Germany. Countries like Japan, the US and France were ranked as developed, and China was ranked as emerging. The EIU summed up the order of the day for governments: more study, multi-stakeholder dialogue and international knowledge sharing.

How to change hiring

On a company level, changing hiring practices is an option as a short-term strategy: “If you can't find the experienced people, you have to break down your hiring practices to skill sets and not titles,” advised Dr. Byron Clayton, as CEO of Advanced Robotics for Manufacturing (ARM) at the IFR Roundtable in Chicago. “You have to hire more for potential. If you can't find the person who is experienced then you have to find a person that has potential to learn that job.”

Education of the workforce

Robot suppliers support the education of the workforce with practice-oriented training. “Re-training the existing workforce is only a short-term measure. We must already start way earlier – curricula for schools and undergraduate education need to match the demand of the industry for the workforce of the future. Demand for technical and digital skills is increasing, but equally important are cognitive skills like problem-solving and critical thinking,” says Dr. Susanne Bieller, IFR's General Secretary. “Economies must embrace automation and build the skills required to profit - otherwise they will be at a competitive disadvantage.”

December 8–11, 2020 automatica - IFR Executive Round Table

The topic “Next Generation Workforce - Upskilling for Robotics” will be discussed by the IFR Executive Round Table on December 9 at the trade fair for smart automation and robotics “automatica” in Munich.

ABB Launches New Analytics and AI Software to Help Producers Optimize Operations in Demanding Market Conditions

The analytics software and services combine operational data with engineering and IT data to deliver actionable intelligence

Artificial Intelligence

The ABB Ability™ Genix Industrial Analytics and AI Suite is a scalable advanced analytics platform with pre-built, easy-to-use applications and services. It collects, contextualizes and converts operational, engineering and information technology data into actionable insights that help industries improve operations, optimize asset management and streamline business processes safely and sustainably.

Analyst studies suggest that industrial companies typically are able to use only 20 percent of the data generated, which severely limits their ability to apply data analytics meaningfully. ABB’s new solution operates as a digital data convergence point where streams of information from diverse sources across the plant and enterprise are put into context through a unified analytics model. Application of artificial intelligence on this data produces meaningful insights for prediction and optimization that improve business performance.

Better use of operational data through the combination with engineering and IT data

“We believe that the place to start a data analytics journey in the process, energy and hybrid industries is by building on the existing digital technology – the automation that controls the production processes,” said Peter Terwiesch, President of ABB Industrial Automation. “We see a huge opportunity for our customers to use their data from operations better, by combining it with engineering and information technology data for multi-dimensional decision making. This new approach will help our customers make literally billions of better decisions.”

ABB Ability™ Genix is composed of a data analytics platform and applications, supplemented by ABB services, that help customers decide which assets, processes and risk profiles can be improved, and assists customers in designing and applying those analytics. Featuring a library of applications, customers can subscribe to a variety of analytics on demand, as business needs dictate, speeding up the traditional process of requesting and scheduling support from suppliers.

Scalable from plant to enterprise, ABB Ability™ Genix supports a variety of deployments including cloud, hybrid and on-premise. ABB Ability™ Genix leverages Microsoft Azure for integrated cloud connectivity and services through ABB’s strategic partnership with Microsoft.

Accelerating business outcomes and protecting existing investments

“The ABB Ability™ Genix Suite brings unique value by unlocking the combined power of diverse data, domain knowledge, technology and AI,” said Rajesh Ramachandran, Chief Digital Officer for ABB Industrial Automation. “ABB Ability™ Genix helps asset-intensive producers with complex processes to make timely and accurate decisions through deep analytics and optimization across the plant and enterprise. We have designed this modular and flexible suite so that customers at different stages in their digitalization journey can adopt ABB Ability™ Genix to accelerate business outcomes while protecting existing investments.”

A key component of ABB Ability™ Genix is the ABB Ability™ Edgenius Operations Data Manager that connects, collects, and analyzes operational technology data at the point of production. ABB Ability™ Edgenius uses data generated by operational technology such as DCS and devices to produce analytics that improve production processes and asset utilization. ABB Ability™ Edgenius can be deployed on its own, or integrated with ABB Ability™ Genix so that operational data is combined with other data for strategic business analytics.

“There is great value in data generated by automation that controls real-time production,” said Bernhard Eschermann, Chief Technology Officer for ABB Industrial Automation. “With ABB Ability™ Edgenius, we can pull data from these real-time control systems and make it available to predict issues and prescribe actions that help us use assets better and fine-tune production processes.”

Real-time Data: How to Take Advantage of 5G Opportunities?

Oracle set up a webcast highlighting the 5G revolution in the IT ecosystem

Artificial Intelligence, Automation, Industry 4.0

5G is planned to be 100% ready in 2030. Announced as a revolution, this powerful digital transformation is set to change our daily lives substantially.

Its speed is at least 10 times greater than that of 4G. The latency time should tend towards 1ms. These new performance indicators bring new opportunities in terms of application development, data management and analysis, but also risk in terms of cybersecurity.

5G will open the door to an abundant new source of data, which requires adapting business strategies. These are the issues discussed by the speakers of this webinar, organized by Oracle and broadcast on September 2, 2020.

“5G is cloud-ready” Dany Charbachy, Senior Solution Architect - Telecommunication Technologies, Oracle

Benoit Jouffret, CTO, Thales Digital Identity and Security GBU, reported on the new features in the 5G specifications. The International Telecommunication Union (ITU) has identified three work axes. Enhanced Mobile Broadband (eMBB) offers data rates 10 to 20 times higher than 4G, with speeds reaching 20 Gbit/s instead of 1 Gbit/s. Ultra-Reliable Low Latency Communication (URLLC) brings new performance that is very useful for vehicle-to-vehicle communications, where latency plays a key role: here the latency is much lower than in 4G, 1 ms versus 10 ms. Finally, Massive Machine Type Communication (mMTC) brings opportunities for the IoT through a denser connection of objects: 100,000 objects connected per km² with 4G compared to 1 million objects connected per km² with 5G. This density of objects will be of use for connecting robots together with very low latency times.
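To put the quoted figures side by side, the following minimal sketch computes the improvement factor for each metric and the time needed to move a file at the quoted peak rates. The numbers are the ones cited by the speaker; the function names and the 5 GB file size are illustrative assumptions, and real-world throughput will be well below these peak values.

```python
# 4G vs 5G figures as quoted in the webinar report: (4G value, 5G value).
FIGURES = {
    "peak_rate_gbit_s": (1, 20),
    "latency_ms": (10, 1),
    "devices_per_km2": (100_000, 1_000_000),
}


def improvement_factors(figures):
    """Return how many times better 5G is than 4G for each metric.

    For latency a lower value is better, so the ratio is inverted.
    """
    factors = {}
    for name, (value_4g, value_5g) in figures.items():
        lower_is_better = name.startswith("latency")
        factors[name] = value_4g / value_5g if lower_is_better else value_5g / value_4g
    return factors


def transfer_time_s(size_gbyte, rate_gbit_s):
    """Idealised transfer time in seconds (8 bits per byte, no protocol overhead)."""
    return size_gbyte * 8 / rate_gbit_s


if __name__ == "__main__":
    for metric, factor in improvement_factors(FIGURES).items():
        print(f"{metric}: {factor:g}x")
    # Hypothetical 5 GB file at the quoted peak rates.
    print("4G:", transfer_time_s(5, FIGURES["peak_rate_gbit_s"][0]), "s")
    print("5G:", transfer_time_s(5, FIGURES["peak_rate_gbit_s"][1]), "s")
```

At these peak rates the hypothetical 5 GB file would take 40 seconds on 4G and 2 seconds on 5G, which is the 20-fold factor in a more tangible form.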

Dany Charbachy, Senior Solution Architect - Telecommunication Technologies, Oracle, highlighted Oracle's solutions for 5G. Oracle's approach is through a core network offering. Either part or all of the core network is provided “on-premise” to customers, or the service is provided from the cloud. 5G allows software to be installed in the cloud and developed at a larger scale. This brings convergence between the IT world and the telecommunications world, because the cloud allows open connections to third-party applications. Thus, companies will be able to offer services relying on 5G cloud technology.

Smart technologies will be the norm by 2030

Lastly, Eric Vessier, Principal Sales Consultant - Analytics & Data Science, Oracle, shed light on a use case of 5G through the example of smart mobility. The concept of smart mobility is based on flexibility through the choice of transport modes, travel efficiency (minimum disruption), integration (full door-to-door route), clean technologies (zero emissions objective), and security. It also improves the quality of life by lowering the cost of transport, which becomes more varied and less polluting.

Presented as a guarantee of quality and better collaboration between partners, 5G brings a set of opportunities that CIOs and IT managers will have to integrate without neglecting the security and the way they will handle this massive and multifaceted volume of data.

Mouser's Digital AI Conference is now Available On-Demand

If you didn't have the chance to join the live event, or want to listen again to any of the workshops or presentations, the on-demand version is now available until September 30, 2020

Artificial Intelligence, Automation, Covid-19, Industry 4.0

Mouser Electronics' Digital AI Conference, which took place on September 9th and 10th, is now available on demand.

Day 1: The rise of AI and robotics: How will it change the way we live and work

Speaker: Charlotte Han

While the rise of robotics and AI has a huge potential to lift productivity and economic growth, it’s no secret that many jobs, not limited to low-skilled positions, will be automated in the near future. It surely means many of us will need to adapt to the life-long learning culture, constantly upgrading our skills, but is it still a good bet to “find a good job” when modern-day corporations are hiring fewer full-time employees, favoring a more flexible temporary workforce? In this talk, we will examine how job titles may no longer shape our full identity, what skills we will need to acquire in this new world, and why it’s ever more important to build, contribute to and thrive in communities - of humans.

Day 2: AI and the Future of Home Living: trends and opportunities in the times of a pandemic

Speaker: Johann Romefort

With billions of people quarantined at home, the world discovers that while automation has taken over the workplace, the most mundane tasks at home are still bound to our two hands and some elbow grease. Smart Home promised us intelligent living, but all we got was voice assistants listening to our family lives - what went wrong? Having worked with BSH - the leader in home appliances in Europe - and invested in 20 companies over the last 2 years in the Smart Home category, Johann will explore how AI can indeed simplify our daily lives and what the future holds.

The AI Powered Enterprise: Unlocking the Potential of AI at Scale

Latest Capgemini research highlights that organizations successfully scaling their AI initiatives see the biggest benefit in growing revenue, ahead of improving operational efficiency

Artificial Intelligence, Covid-19

A new report from the Capgemini Research Institute examines the pace of enterprise Artificial Intelligence (AI) adoption in the last three years. Over half (53%) of organizations have now moved beyond AI pilots, a marked increase from 36% in Capgemini’s 2017 report on the same subject. Furthermore, 78% of AI-at-scale leaders continue to progress on their AI initiatives at the same pace as before COVID-19, while another 21% have increased the pace of their deployment. This is in stark contrast to the “struggling organizations”: 43% of whom have pulled their investments while another 16% have suspended all AI initiatives due to high business uncertainties related to COVID-19. The report, ‘The AI Powered Enterprise: Unlocking the potential of AI at scale', reveals that the successful implementation of AI at scale delivers tangible benefits on the top line, with 79% of AI-at-scale leaders seeing more than a 25% increase in sales of traditional products and services. In addition, 62% of the AI-at-scale leaders saw at least a 25% decrease in the number of customer complaints, and 71% witnessed at least a 25% reduction in security threats.

Sector view: Life sciences and Retail continue to lead the way in AI adoption; Financial Services and Utilities lag

In terms of the top five sectors leading AI adoption, life sciences and retail organizations are far ahead of others, making up 27% and 21% of the AI-at-scale leaders respectively, followed by automotive and consumer products with 17% each, and then telecommunications (14%). Only 38% of life sciences organizations have either suspended or pulled investments because of COVID-19, compared to the insurance (66%), banking (64%) and utilities (64%) sectors. This reflects the importance of e-Health in today’s context, where virtual assistants, contact tracing apps and chatbots are proliferating as organizations like the World Health Organization launch AI-based tools to gather as well as provide information during the ongoing pandemic.

Trusted, quality data is essential for scaling AI

AI-at-scale leaders rank “improving data quality” as the number one approach that helps them generate more benefits from their AI systems. Strong data governance ensures that the AI teams have the right quality of data and improves the trust that executives place in the data. Establishing the required technology platforms, such as a hybrid cloud architecture, and democratizing data access serve as core building blocks for scaling AI.

Hiring dedicated AI leads is key to supporting the AI goals of an organization

Capgemini’s research shows that 70% of organizations find a lack of mid to senior-level talent a major challenge for scaling AI. Over half of AI-at-scale leaders (58%) have appointed an AI Head/Lead/Chief AI officer who can provide development teams with a vision, establish guidelines around prioritization of use cases, ethics and security, while harmonizing the use of platforms and tools for AI development. Organizations also need to focus on a wide range of skillsets for scaling AI applications, beyond pure AI technical skills, to include business analysts and change management specialists. However, there is currently a significant gap between demand and supply in important disciplines like machine learning or data visualization. Training and upskilling are therefore critical to address these gaps and ensure that these skillsets can be kept in-house.

Ethical AI interactions play a vital role in creating consumer satisfaction and trust

Regardless of the strong consumer and regulatory focus on ethical AI, Capgemini found that many organizations are not actively addressing issues like the need to have an empowered ethics team. The report found that less than one-third of struggling organizations (29% compared with 90% of AI-at-scale leaders) agree they have a detailed knowledge of how and why their AI systems produce the output they do. This is important for business executives to be able to trust organizational AI systems. At the same time, it is impossible to establish consumer trust if the customer-facing employees lack trust in the models or data organizations use.

“In light of the recent COVID-19 crisis, while organizations are looking at data and AI to bring resilience to their operations, there is an even stronger need for connections between tactical and strategic business objectives and implementation in order to achieve scale,” says Anne-Laure Thieullent, Artificial Intelligence and Analytics Group Offer Leader at Capgemini. “Our research highlights that the most successful organizations combine efforts to rationalize and modernize their data landscape and data governance processes, focus on bringing new agile tools from partner ecosystems as well as approaches like DataOps and MLOps (machine learning ops) to develop and deploy AI solutions, nurture teams from diverse backgrounds, and set up balanced operating models.”

The report concludes with recommendations of four principles for organizations to focus on to successfully scale AI:

● Empower: Build strong foundations providing easy access to trusted, quality data through the right data and AI platforms and tools along with agile practices

● Operationalize: Deploy AI through the right operating model, prioritize initiatives and ensure well-balanced governance while embedding ethics

● Nurture: Build diverse talent and collaboration with ecosystems and partners

● Monitor and amplify: Continuously monitor model accuracy and performance to deliver and amplify business outcomes

The Power of Artificial Intelligence in Healthcare

Article by Subh Bhattacharya, Lead, Healthcare, Medical Devices & Sciences at Xilinx

Artificial Intelligence

The use of artificial intelligence (AI) – including machine learning (ML) and deep learning techniques (DL) - is poised to become a transformational force in healthcare. Patients, healthcare service providers, hospitals, medical equipment makers, pharmaceutical companies, professionals, and various stakeholders in the ecosystem all stand to benefit from ML driven tools. From anatomical geometric measurements, to cancer detection, to radiology, surgery, drug discovery and genomics, the possibilities are endless. In these scenarios, ML can lead to increased operational efficiencies, extremely positive outcomes and significant cost reduction.

Regulatory support is also steadily increasing, and the US Food and Drug Administration (FDA) is approving more and more ML methods for diagnostic assistance and other applications. The FDA has also created a new regulatory framework for ML based products. This new framework refers to ML techniques as “Software as a Medical Device” (SaMD) and envisions significant benefits to quality and efficiency of care. To support this initiative, the FDA introduced a “predetermined change control plan” in premarket submissions, which would include the types of anticipated modifications and the associated methodology to be used to implement those changes in a controlled manner.

The FDA expects commitments from medical device manufacturers on transparency and real-world performance monitoring for SaMD, as well as periodic updates on changes that were implemented as part of the approved pre-specifications and the algorithm change protocol. This framework enables the FDA and the manufacturers to monitor a product from its premarket development to post market performance and allows the regulatory oversight to embrace the iterative improvement power of an SaMD, while assuring patient safety.

Opportunities for ML in healthcare

There’s a broad spectrum of ways that ML can be used to solve critical healthcare problems. For example, digital pathology, radiology, dermatology, vascular diagnostics and ophthalmology all use standard image processing techniques.

Chest x-rays are the most common radiological procedure, with over 2 billion scans performed worldwide every year, or roughly 5.5 million scans a day. Such a huge quantity of scans imposes a heavy load on radiologists and taxes the efficiency of the workflow. ML, Deep Neural Network (DNN) and Convolutional Neural Network (CNN) methods often outperform radiologists in speed and accuracy, although the expertise of a radiologist remains of paramount importance. Under stressful conditions and fast decision-making, however, the human error rate can be as high as 30%. Aiding the decision-making process with ML methods can improve the quality of results, giving radiologists and other specialists an additional tool.

Validation of ML methods is now coming from multiple, very reliable sources. In one study (Reference: Stanford ML Group https://stanfordmlgroup.github.io/projects/chexnet/), a 121-layer CNN was trained to detect pneumonia better than four radiologists. Similarly, in multiple other studies by the National Institutes of Health and other organizations, trials around early detection of cancerous pulmonary nodules for lung cancer detection using a DNN model achieved better accuracy than multiple radiologists’ diagnoses.

Though adoption in digital pathology is slower, multiple algorithm-based detections applied in a study of breast cancer compared well with, and sometimes fared better than, the prognoses from several pathologists. Similarly, RNN/LSTM based approaches to genome annotation produced better predictions of whether single nucleotide variants are potentially pathogenic.

Many procedures within radiology, pathology, dermatology, vascular diagnostics and ophthalmology work on large images, sometimes 5 Megapixels or larger, requiring complex image processing. The ML workflow can also be compute and memory intensive. The predominant computation is linear algebra, which demands many operations and a multitude of parameters.

This results in billions of multiply-accumulate (MAC) operations and hundreds of Megabytes of parameter data, and requires a multitude of operators and a highly distributed memory subsystem. As a result, performing accurate image inference for tissue detection or classification using traditional computational methods on PCs and GPUs is inefficient, and healthcare companies are looking for alternative techniques to address this problem.
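To give a feel for where those numbers come from, here is a minimal sketch that estimates the MAC count and parameter count of a single 2D convolution layer. The layer shape (a 3x3 kernel with 64 output channels applied to a single-channel 2560 x 2048 image, roughly 5 Megapixels) is an illustrative assumption, not a configuration taken from the article or from any specific medical imaging network.

```python
def conv2d_cost(in_h, in_w, in_ch, out_ch, kernel, stride=1, padding=0):
    """Estimate the cost of one square-kernel 2D convolution layer.

    Returns (out_h, out_w, params, macs), where params counts weights
    plus one bias per output channel and macs counts one
    multiply-accumulate per weight per output position.
    """
    out_h = (in_h + 2 * padding - kernel) // stride + 1
    out_w = (in_w + 2 * padding - kernel) // stride + 1
    weights_per_filter = kernel * kernel * in_ch
    params = weights_per_filter * out_ch + out_ch
    macs = out_h * out_w * out_ch * weights_per_filter
    return out_h, out_w, params, macs


if __name__ == "__main__":
    # Hypothetical first layer on a ~5 Megapixel grayscale image.
    out_h, out_w, params, macs = conv2d_cost(
        in_h=2048, in_w=2560, in_ch=1, out_ch=64, kernel=3, padding=1
    )
    print(f"output: {out_h} x {out_w}, params: {params}, MACs: {macs:,}")
    # → output: 2048 x 2560, params: 640, MACs: 3,019,898,880
```

Even this one small layer needs about 3 billion MACs per image at full resolution. Deeper layers with more input channels multiply that figure, which is why full-resolution inference is so demanding.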

Improved efficiency with ACAP devices

Xilinx technology offers a heterogeneous and highly distributed architecture to solve this problem for healthcare companies. The Xilinx Versal™ Adaptive Compute Acceleration Platform (ACAP) family of System-on-Chips (SoCs), with its adaptable Field Programmable Gate Arrays (FPGAs), integrated digital signal processors (DSPs), integrated accelerators for deep learning, SIMD VLIW engines with a highly distributed local memory architecture and multi-processor systems, is known for its ability to perform massively parallel signal processing of high-speed data in close to real-time.

Additionally, Versal ACAP has multi-terabit-per-second Network on Chip (NoC) interconnect capability and an advanced AI Engine containing hundreds of tightly integrated VLIW SIMD processors. This means computing capacity can be moved beyond 100 Tera operations per second (TOPS).

These device capabilities dramatically improve the efficiency with which complex healthcare ML algorithms are solved and help to significantly accelerate healthcare applications at the edge, all with fewer resources, lower cost and less power. With Versal ACAP devices, support for recurrent networks could be inherent due to the simple nature of the architecture and its supporting libraries.

Xilinx has an innovative ecosystem for algorithm and application developers. Unified software platforms, such as Vitis™ for application development and Vitis AI™ for optimising and deploying accelerated ML inference, mean developers can use advanced devices – such as ACAPs - in their projects.

Healthcare and medical device workflows are undergoing major changes. In the future, medical workflows will be ‘Big Data’ enterprises with significantly higher requirements for computational needs, data privacy, security, patient safety and accuracy. Distributed, non-linear, parallel and heterogeneous computing platforms are key for solving and managing this complexity. Xilinx devices like Versal and the Vitis software platform are ideal for delivering the optimized AI architectures of the future.

Subh Bhattacharya

NXP Semiconductors Announced a New Phase of Connectivity Innovation

NXP releases its new family of 2x2 Wi-Fi 6 (802.11ax) Dual Band + Bluetooth/BLE solutions, allowing new levels of connectivity for advanced gaming, audio, industrial and IoT markets, and powering the first Wi-Fi 6 enabled gaming consoles

Industry 4.0

On October 8, NXP Semiconductors announced its new family of 2x2 Wi-Fi 6 (802.11ax) Dual Band + Bluetooth/BLE solutions that aim to drive a new phase of connectivity innovation for advanced gaming, audio, industrial and IoT markets. Enabling the world’s first Wi-Fi 6 enabled gaming console, NXP’s optimized IW62X family of products aims to provide increased capacity, efficiency and performance for next-generation connectivity solutions including smart consumer IoT hubs, wireless speakers, video-enabled smart devices, augmented and virtual reality (AR/VR) devices and a universe of additional IoT applications.

Advanced wireless capabilities

The new 2x2 Wi-Fi 6 solutions are fitted for advanced gaming consoles that demand high-performance Wi-Fi with near zero wireless controller lag time and low-latency multiplayer gaming experiences (via a single, in-room console) over wireless networks. The IW62X solutions also provide real-time interactions for cloud-based gaming devices, clients, and services by providing high-bandwidth and low-latency connectivity needed for full 4k / 60 fps gameplay.

Rafael Sotomayor, Executive Vice President of Connectivity and Security at NXP commented: “Today we’re delivering the industry’s leading 2x2 Wi-Fi 6 Dual Band + Bluetooth solutions, enabling both the gaming industry and one of its most prominent console makers with Wi-Fi 6 for the first time. This product announcement reinforces NXP’s position at the cutting edge of connectivity and our ability to enable advanced wireless capabilities for the future of gaming plus the exponential growth in Wi-Fi 6 access points and IoT devices tethered to a single gateway. Our IW62X family of integrated Wi-Fi 6 + Bluetooth solutions brings a step-change in high performance and power efficient solutions for the latest-generation of smart home IoT services, connected industrial applications and the vast number of consumer devices that rely on power-efficient Wi-Fi and Bluetooth connectivity to maximize the user experience.”

A milestone to the future of consumer and industrial IoT

NXP’s family of 2x2 Wi-Fi 6 Dual Band + Bluetooth solutions brings a substantial boost in speed, a 4x increase in network capacity and a 2x increase in bandwidth over previous generations of Wi-Fi. This increased capacity with reduced latency supports a significantly higher number of users and devices connected to a network or gateway.

These features support a broad range of use cases: AR/VR headsets, making fully wireless immersive experiences in HD possible; multi-channel home theatre audio experiences with wireless speakers; video-enabled smart assistants with full bandwidth to support seamless 4k video interaction and content streaming; smart IoT hubs, appliances and accessories; outdoor and remote IP-enabled security cameras; and consumer, commercial, and industrial IoT solutions for home and building control (smart lighting, building safety and security, and climate control).

Expanded capabilities with NXP’s Wi-Fi 6 + BT/BLE and Wi-Fi 6 + BT/BLE-Audio

The BT/BLE radios support Bluetooth/BLE standards (BLE LR/2Mbps, BLE AoA/AoD, BLE Mesh), independent Bluetooth connections and operational modes with multiple external devices. These capabilities enable advanced smart hub and smart home services that use Bluetooth-based positioning and Mesh connectivity, providing support for numerous applications, including dual/independent wireless headset support, robust support of audio streaming (via stereo A2DP, Isochronous Channel); and separate low-power peripheral/accessory control, including speaker, voice assistant, audio hubs/soundbar, physical remote control and low-power device wakeup.

The IW62X family features the highest level of integration in the market, including 2.4 and 5 GHz dual band Power Amplifiers (PAs), Low Noise Amplifiers (LNAs) and antenna switches. It also includes a power management unit that significantly reduces system-level BOM (Bill of Materials) and device board area, while also simplifying chip-on-board and integrated-module designs.

AI Platform based on Nvidia Quadro GPUs

Designed for real time industrial video analysis

Artificial Intelligence

Acceed has added a range of high-performance, robust and fanless industrial controllers with the new AVA-5500 series AI platform. Equipped with 6th and 7th generation Intel i7 processors and the Nvidia Quadro P5000 MXM 3.1 graphics processor, the AVA-5520 model provides a reliable, high-performance basis for industrial video and graphics analysis applications. Eight M12 GbE ports on the front side, four of which include PoE, serve for the connection of high-resolution cameras. In addition, four serial DB-9 sockets, four USB 3.0 ports and two 1000BASE-T RJ45 Ethernet interfaces are available, also readily accessible on the front side of the compact full-metal casing.

Different AVA-5500 series models are already used in the field of railway construction, automatic inspection of tracks with measurement trains at high travel speed, and for video monitoring station concourses and station platforms. In their interplay with corresponding software and sophisticated analysis algorithms, these systems are able to generate warnings and alarms automatically in order to provide early information pertaining to potential dangers.

The AVA-5520 is developed for industrial application under demanding conditions within a maximum temperature range from -25 °C to +70 °C.

Local graphics output is made available in high resolution via two CPU display ports, four GPU display ports and a DVI connector. The working memory can be configured up to 32 GB, and two internal SATA slots for 2.5” hard drives are available for local storage media. An externally accessible CFast slot and an M.2 slot (2280) are provided as further storage options. Radio modules for WLAN and mobile communication (LTE, UMTS, GSM) can be integrated via the two internal mini PCIe slots, each with its own USIM slot. Four isolated digital inputs and four isolated digital outputs, as well as connections for audio communication, round off the equipment.

The connection for the 12 VDC power supply and for M12 industrial plugs is located on the reverse side. Six LEDs on the front side, three of which can be configured to customer requirements, allow fast function control and diagnosis. Windows 10 and Ubuntu 16.04 are supported as operating systems. Power consumption at 100% load of the CPU and the P5000 graphics card is 177 W.

Complete Specialist AI Package with Interfaces and Sensor Technology

Symate and Pepperl+Fuchs partner to develop a new solution including specialist functions for automated process analysis and optimization

Artificial Intelligence

Symate, a specialist in optimizing manufacturing processes using artificial intelligence (AI) techniques, has combined its expertise with Pepperl+Fuchs. They aim to create an integrated specialist solution for the automated analysis and optimization of industrial manufacturing processes and quality characteristics. This will provide users with a broad choice of functions and perfectly coordinated hardware and software components. Moreover, it marks a strategic milestone for Symate, which is now closer to achieving its goal of creating a central AI platform for industrial production.

As part of a pilot project, Symate is currently testing Pepperl+Fuchs hardware components and connecting them to the Detact® AI system. This represents the basis for a perfectly coordinated package that offers both companies' customers a significantly wider range of functions for automating manufacturing processes.

A win-win solution for everyone involved

Pepperl+Fuchs can receive valuable feedback from ongoing processes and clearly visualize the data from its own sensors, while Symate is significantly closer to its strategic goal of developing Detact® into a central, holistic AI platform for the processing industry.

David Haferkorn, the Product Manager responsible for this project at Symate GmbH, explained: "Our AI system detects and analyzes many different parameters. To process this data quickly and reliably at any time, we require highly reliable hardware components that provide very detailed information. Although we can integrate almost any source of data into Detact®, the quality of the data provided is not always suitable for comprehensive, automated AI analysis. Therefore, we must often make adjustments or install additional sensors. In Pepperl+Fuchs we have found an automation technology expert that can really support us in selecting the right hardware. Now, from day one, we can offer our customers reliable hardware components that can be perfectly coordinated with our software and integrate specialist functions based on the data set available from Pepperl+Fuchs."

Daniel Möst, New Business Development at Pepperl+Fuchs, added: "With Symate, we would like to combine our expertise in sensor technology with the artificial intelligence of Detact®. From our perspective, this is a perfect match for future-oriented business models. At Pepperl+Fuchs, we are already experiencing completely new scenarios when we link the data from our sensors with Detact® and visualize it clearly. Currently, various industrial sensors with IO-Link interfaces, RFID components, IO-Link masters, and the associated connection technology are being used in the test phase at Symate. Once we use the sensor technology in a "real" plant, we will be able to gain further practical experience and develop a perfect solution for industrial applications. We are very excited about that."

Protect Machines Using Sound, IoT and AI

All-in-one solution for predictive maintenance

Artificial Intelligence, Electronics & Electricity

Technicians can often tell when a machine does not sound right. What if they could listen to machines safely from a distance, or even from their home? And what if they had a powerful AI trained to reliably alert them about sound anomalies that usually precede failures? With Neuron Soundware, it is not 'if' any more.

Neuron's intelligent recording and edge computing IoT device monitors machines continuously, and AI-based software automatically checks the collected audio data against an extensive database of warning sounds. As a result, you are notified about detected abnormal behaviour and possible future malfunctions.

Insights into machine health will give you predictive capabilities and help you build another layer of protection. Improve your asset immunity and recover more easily and quickly in the future. Optimized maintenance cycles will help you save money. Prepare your assets for fewer face-to-face checks and for longer lifetimes.

Factory Intelligence System

A combination of process analytics and tools to manage production

Artificial Intelligence

To enhance the journey to smart manufacturing and improve efficiency, MTEK released MBrain, a Factory Intelligence System. Mattias Andersson, MTEK’s Founder and CEO, commented: “We firmly believe that accelerating the journey to the smart factory will make manufacturers more competitive, more efficient and improve factory and financial performance, regardless of their geography. We are confident we can assist manufacturers in their digitalization journey by liberating the value of the data found in manufacturing processes. MBrain is a Factory Intelligence System that takes a systemized approach to extracting that value, driving real performance improvements.”

Designed to be intuitive for the user and to complement the existing software landscape

MBrain connects to production machines and processes throughout the product life cycle, from design to end of life, linking operations to performance through cross-process analytics. MBrain is scalable across the entire enterprise. It also simplifies continuous improvement with a real-time quality toolbox and streamlines manufacturing planning, control and traceability. MTEK CTO Tord Johnson added: “The machines and processes in any manufacturing ecosystem contain huge amounts of data. Today, this data is too often located in siloed applications. There has been no intuitive way to use it to accurately correlate cause and effect, and hence provide the feedback necessary to improve performance. MBrain connects to any machine in the factory, correlating machine data with production output, to improve performance and quality by using drag-and-drop advanced analytics tools like real-time SPC and Artificial Intelligence.”

MTEK will host a launch webinar on September 29 at 10 am CET where MBrain will be presented in more detail. The webinar will be available on demand for registered users.

NVIDIA GPU Cloud Ready Modular Embedded PC

Designed for AI applications at the Edge, image processing and transport/traffic

Artificial Intelligence

ICP Deutschland provides the modular embedded system MX1-10FEP-D which has undergone functional tests by NVIDIA® and has thus been classified as "NVIDIA GPU Cloud Ready" (NGC). NGC is the core for process-optimized software such as deep learning, machine learning and high-performance computing.

Through its modular concept, the MX1-10FEP-D can be flexibly adapted to meet individual requirements

The advanced mechanical design enables the installation of NVIDIA® graphics cards such as the NVIDIA® Tesla® P4 or NVIDIA® T4. The embedded PC supports 8th and 9th generation Xeon® and Core™ i processors from Intel® and offers sufficient CPU processing power. To exploit its full potential, ICP offers the MX1-10FEP-D with matching industrial-grade memory and storage media as a ready-to-use system.

Traditional Image Processing vs. Deep Learning

Decoding the dichotomy

Vision & Identification, Artificial Intelligence

HCL. Deep learning has certainly revolutionized traditional image processing. It has pushed the boundaries of Artificial Intelligence to unlock potential opportunities across industry verticals. Several challenges that once seemed impossible to solve are now solved to a point where machines are performing better than humans. However, that does not mean that the traditional image processing techniques that advanced in the years before the rise of deep learning (DL) have been made obsolete. This paper will analyze the benefits and drawbacks of each approach. It aims to provide better clarity on the subject, which can help data scientists and industries choose the most suitable method depending on the task at hand. In this paper, the term “traditional image processing” shall be used to refer to a broader area which encompasses the domains of image processing, computer vision, and classical machine learning.

Augmented and Virtual Reality: The Reality Spectrum

Visualizing potential across hardware, software, and services

Industry 4.0, Vision & Identification, Artificial Intelligence

ABI. Over the past decade, the prevalence of new enabling technologies in numerous marketplaces has grown significantly. Any market examined will point to new applications and use cases being enabled through novel technology adaptations and expanding product portfolios. This has consisted of significant digitization in the enterprise sector: basic connectivity added to an implementation enabling new things. This gave rise to analytics, automation, Artificial Intelligence (AI), and more. Immersive technologies in Augmented Reality (AR) and Virtual Reality (VR) have presented and, to some extent, already proven to be more than iterative improvements to existing tech in both consumer and enterprise domains. A level of immersion not possible before was introduced with VR, while AR offered an entirely unique way of accessing and overlaying data without removing a user from the world.

TIMGlobal Media BV

177 Chaussée de La Hulpe, Bte 20, 1170 Brussels, Belgium

o.erenberk@tim-europe.com - www.ien.eu

- Editorial Director: Orhan Erenberk, o.erenberk@tim-europe.com

- Editor: Kay Petermann, k.Petermann@tim-europe.com

- Editorial Support / Energy Efficiency: Flavio Steinbach, f.steinbach@tim-europe.com

- Associate Publisher: Marco Marangoni, m.marangoni@tim-europe.com

- Production & Order Administration: Francesca Lorini, f.lorini@tim-europe.com

- Website & Newsletter: Marco Prinari, m.prinari@tim-europe.com

- Marketing Manager: Marco Prinari, m.prinari@tim-europe.com

- President: Orhan Erenberk, o.erenberk@tim-europe.com

Advertising Sales

Tel: +41 41 850 44 24

Tel: +32-(0)11-224397

Fax: +32-(0)11-224397

Tel: +32 (0) 2 318 67 37

Tel: +49-(0)9771-1779007

Tel: +39-02-7030 0088

Turkey

Tel: +90 (0) 212 366 02 76

Tel: +44 (0)7594 239 182

John Murphy

Tel: +1 616 682 4790

Fax: +1 616 682 4791

Incom Co. Ltd

Tel: +81-(0)3-3260-7871

Fax: +81-(0)3-3260-7833

Tel: +39(0)2-7030631
