The global pandemic has radically impacted the supply chain and logistics industry, making the need for robotic automation more urgent than ever. With more than 70% of warehouse labor dedicated to picking and packing, many companies are gradually investing in logistics automation. But what happens when robots must handle an unlimited number of (unknown) stock keeping units? These companies need a fast, reliable, and robust way to automate the picking and placing of a large variety of objects.

The Dutch company Fizyr has successfully taken up this challenge. The Delft-based computer vision company focuses on enabling robots to pick unknown objects even in harsh logistics environments. The result is an automated vision solution that enables logistics automation in various conditions and applications, such as item picking, parcel handling, depalletizing, truck unloading, and baggage handling. To complete the system with the optimal hardware, Fizyr integrates compact, robust Ensenso 3D cameras in combination with high-performance GigE uEye cameras from IDS. Fizyr has created a plug-and-play modular software product that integrates smoothly with any system, giving integrators the freedom to choose the best hardware (e.g. cobots or industrial robots) for their picking cell. In essence, the cameras see the products to be picked or classified. Depending on the individual customer application, up to four Ensenso 3D cameras are used in combination with powerful GigE uEye CMOS cameras.

Ensenso 3D camera enables robots to handle unknown objects individually

The integrated IDS industrial cameras provide the reliable, precise image capture that Fizyr's software algorithms need. These algorithms deliver over 100 grasp poses per second, including a classification that allows different objects to be handled differently. The software also performs quality control and detects defects to prevent damaged items from being placed on a sorter, with the IDS cameras acting as the sharp eyes of the automated system.

These algorithms provide all relevant information on segmentation and on the classification of the parcel type (box, bag, envelope/flat, tube, cylinder, deformable, etc.). The system recognizes outliers and non-conveyables (e.g. damaged goods), the best possible grasp poses in 6 DoF (degrees of freedom), and multiple ordered poses per object. It allows sensors and robots to deal with closely stacked or overlapping objects, highly reflective items, apparel in polybags, white-on-white and black-on-black flats, and transparent objects.
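The article does not describe Fizyr's output format. As a purely illustrative sketch, the Python below shows one way the per-object results just listed (parcel type, a conveyable flag for outliers, and several 6-DoF grasp poses ordered best-first) could be represented. The class names, the fields, and the `score` value are hypothetical assumptions, not Fizyr's API.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass(frozen=True)
class GraspPose:
    """Hypothetical 6-DoF grasp: 3 translational + 3 rotational degrees of freedom."""
    x: float      # position in metres
    y: float
    z: float
    roll: float   # orientation in radians
    pitch: float
    yaw: float
    score: float  # assumed quality score, higher is better


@dataclass
class DetectedObject:
    """Hypothetical per-object result: segment id, parcel type, grasp candidates."""
    object_id: int
    parcel_type: str   # e.g. "box", "bag", "envelope/flat", "tube", "deformable"
    conveyable: bool   # False for outliers such as damaged goods
    grasps: List[GraspPose] = field(default_factory=list)

    def ordered_grasps(self) -> List[GraspPose]:
        """Return grasp candidates best-first; non-conveyables get none."""
        if not self.conveyable:
            return []
        return sorted(self.grasps, key=lambda g: g.score, reverse=True)


box = DetectedObject(1, "box", True, [
    GraspPose(0.10, 0.20, 0.50, 0.0, 0.0, 0.00, score=0.4),
    GraspPose(0.12, 0.20, 0.50, 0.0, 0.0, 1.57, score=0.9),
])
print([g.score for g in box.ordered_grasps()])  # [0.9, 0.4]
```

Keeping the ordering inside the object means a robot controller could simply try the first pose and fall back to the next one if it is unreachable.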

Because the quality of stereo vision depends directly on the lighting conditions of the scene and on the surface texture of the objects, finding corresponding points and calculating their coordinates on weakly textured or reflective surfaces is very difficult. To meet these high requirements, Fizyr primarily relies on camera models from the IDS portfolio for its customer solutions. The tasks are clearly divided: the uEye GigE CP camera takes 2D images of the objects and provides them as input to Fizyr's algorithms, which then classify the objects under the camera. The objects can be unknown and can vary in shape, size, color, material, and stacking. The Ensenso camera then creates the point cloud; Fizyr's software combines it with the information from the 2D image, analyzes the surface of the cloud for suitable grasp poses for the gripper (or multiple grippers), and proposes the best ones. A clear representation of surfaces made of different materials is critical, as it is a key input to Fizyr's algorithms. The Ensenso 3D cameras from IDS improve on the classic stereo vision principle with additional techniques that achieve higher-quality depth information and more precise measurement results.
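Why corresponding points matter can be shown with the standard triangulation formula for a rectified stereo pair: once a surface point is matched in both images, its depth is Z = f·B/d, where f is the focal length in pixels, B the baseline between the two sensors, and d the disparity (the horizontal pixel offset between the matches). The Python sketch below assumes an ideal rectified pair; the focal length and baseline values are hypothetical and are not Ensenso specifications. It also shows that a disparity error turns into a depth error that grows with Z², which is one reason a missed or wrong match on a weakly textured surface is costly.

```python
def depth_from_disparity(disparity_px: float, focal_px: float, baseline_m: float) -> float:
    """Depth Z = f * B / d for an ideal rectified stereo pair."""
    if disparity_px <= 0:
        # No valid correspondence (or a point at infinity): depth is undefined.
        raise ValueError("disparity must be positive")
    return focal_px * baseline_m / disparity_px


def depth_error(depth_m: float, focal_px: float, baseline_m: float,
                disparity_err_px: float = 1.0) -> float:
    """First-order depth error: |dZ| ~ Z**2 / (f * B) * |dd|."""
    return depth_m ** 2 / (focal_px * baseline_m) * disparity_err_px


# Hypothetical parameters, not Ensenso specifications.
FOCAL_PX = 1000.0
BASELINE_M = 0.1

z = depth_from_disparity(50.0, FOCAL_PX, BASELINE_M)  # 2.0 m
err = depth_error(z, FOCAL_PX, BASELINE_M)            # about 0.04 m per pixel of disparity error
print(z, err)
```

Doubling the working distance to 4 m would, under the same assumptions, quadruple the per-pixel depth error to about 0.16 m, which is why sub-pixel matching and good texture matter at longer ranges.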

The system robustly segments unknown parcels, even under harsh lighting conditions.

The specific camera models, as well as the number of cameras per system, depend on the customer's individual use case. For a typical bin-picking solution with a cobot, one Ensenso N35 is used in combination with a GigE uEye CP. Some clients, however, use one Ensenso X36 and a GigE uEye CP for bin picking, together with four Ensenso N35 cameras for stowing the items in other bins.

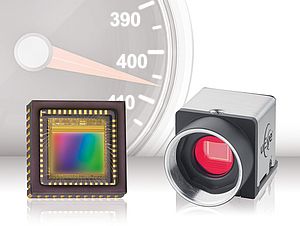

The recommended uEye CP, where CP stands for "Compact Power", is a tiny powerhouse for all kinds of industrial applications. It offers maximum functionality with extensive pixel pre-processing and, thanks to its internal 120 MB image memory, is also perfectly suited for multi-camera systems. The camera delivers data at full GigE speed and enables single-cable operation over up to 100 metres via PoE (Power over Ethernet).
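A rough calculation illustrates what "full GigE speed" and a 120 MB image memory mean in practice. The sketch below assumes a 1280 x 1024 pixel frame at 8 bits per pixel (raw data, no color conversion), a nominal 1 Gbit/s link with protocol overhead ignored, and 120 MB read as 120 × 10⁶ bytes. These are assumptions for illustration; the camera's real maximum frame rate may be limited by the sensor rather than by the link.

```python
GIGE_BYTES_PER_S = 1_000_000_000 / 8  # nominal 1 Gbit/s; protocol overhead ignored


def frame_bytes(width: int, height: int, bits_per_pixel: int = 8) -> int:
    """Size of one uncompressed frame in bytes."""
    return width * height * bits_per_pixel // 8


def max_fps_over_link(width: int, height: int, bits_per_pixel: int = 8,
                      link_bytes_per_s: float = GIGE_BYTES_PER_S) -> float:
    """Upper bound on the frame rate imposed by link bandwidth alone."""
    return link_bytes_per_s / frame_bytes(width, height, bits_per_pixel)


def frames_in_memory(memory_bytes: int, width: int, height: int,
                     bits_per_pixel: int = 8) -> int:
    """How many whole frames fit into the camera's image memory."""
    return memory_bytes // frame_bytes(width, height, bits_per_pixel)


size = frame_bytes(1280, 1024)                        # 1310720 bytes
fps = max_fps_over_link(1280, 1024)                   # about 95.4 frames per second
buffered = frames_in_memory(120 * 10**6, 1280, 1024)  # 91 frames
print(size, round(fps, 1), buffered)
```

Under these assumptions the camera could hold roughly 90 raw frames on board, which presumably helps when several cameras share one network link and cannot all transmit at full rate at the same moment.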

The selected UI-5240CP-C-HQ Rev. 2 is a particularly powerful industrial camera with the 1.3 megapixel e2v CMOS sensor. This is one of the most sensitive sensors in the IDS product portfolio; besides the color version used here, it is also available in monochrome and NIR versions. Apart from its outstanding light sensitivity, the camera offers a range of other distinctive features that make it extremely flexible when requirements or ambient conditions change.

The Ensenso N35 3D camera combines two monochrome CMOS sensors (global shutter, 1280 x 1024 pixels) and a projector in a compact, robust aluminum housing with a lockable GPIO connector for trigger and flash and a GigE connector. Power over Ethernet enables data transfer and power supply over long cable lengths. Thanks to the integrated FlexView technology, the N35 models are particularly suitable for 3D acquisition of still objects and for working distances of up to 3,000 mm.

The Ensenso X36 3D camera system consists of a projector unit, two GigE uEye cameras with either 1.3 MP or 5 MP sensors (CMOS, monochrome), mounting brackets and adjustment angles, three lenses, and sync and patch cables to connect the cameras to the projector unit. The FlexView technology provides better spatial resolution and makes the system very robust on dark or reflective surfaces.

"Fizyr normally uses one camera per system, usually uEye cameras in combination with Ensenso N35 and X36, but there are no limitations. The most common use case for Fizyr so far is one uEye and one Ensenso per system," emphasizes Herbert ten Have, CEO at Fizyr.

The different Ensenso models that can be used have one thing in common: they all have a high-intensity projector that uses a pattern mask to produce a high-contrast texture on the object surface, even under difficult lighting conditions. The projected texture supplements weak or non-existent surface structure on the object, which is why this principle is also called “Projected Texture Stereo Vision”. The result is a more detailed disparity map and more complete, homogeneous depth information of the scene.
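The effect of the projected texture can be illustrated with generic block matching, a common way to compute disparities (the article does not say which matching algorithm Ensenso uses internally). The Python sketch compares a window around one pixel in the left image row with shifted windows in the right row using the sum of absolute differences (SAD). On a uniform, texture-free surface every candidate disparity costs the same, so the match is ambiguous; once a pseudo-random pattern, standing in for the projector's texture, is added, the true disparity becomes the unique minimum. The row width, window size, and true disparity are arbitrary assumptions.

```python
import random
from typing import List


def sad(left: List[int], right: List[int], x: int, d: int, half: int) -> int:
    """Sum of absolute differences between the window at x (left) and at x - d (right)."""
    return sum(abs(left[x + i] - right[x - d + i]) for i in range(-half, half + 1))


def match_costs(left: List[int], right: List[int], x: int, max_disp: int,
                half: int = 3) -> List[int]:
    """Matching cost for every candidate disparity 0..max_disp at pixel x."""
    return [sad(left, right, x, d, half) for d in range(max_disp + 1)]


def is_unambiguous(costs: List[int]) -> bool:
    """True if exactly one disparity reaches the minimum cost."""
    return costs.count(min(costs)) == 1


def make_pair(row: List[int], true_disp: int):
    """Simulate a flat surface: the right row is the left row shifted by true_disp pixels."""
    right = row[true_disp:] + [0] * true_disp
    return row, right


WIDTH, TRUE_DISP, X, MAX_DISP = 64, 5, 32, 16

# White-on-white case: every pixel has the same brightness.
plain_left, plain_right = make_pair([128] * WIDTH, TRUE_DISP)
plain_costs = match_costs(plain_left, plain_right, X, MAX_DISP)

# Same surface with a pseudo-random dot pattern standing in for the projected texture.
random.seed(0)
pattern = [random.randint(0, 255) for _ in range(WIDTH)]
tex_left, tex_right = make_pair(pattern, TRUE_DISP)
tex_costs = match_costs(tex_left, tex_right, X, MAX_DISP)

print(is_unambiguous(plain_costs))      # False: all 17 candidates cost 0
print(tex_costs.index(min(tex_costs)))  # 5, the true disparity
```

Real matchers work on 2D windows, add sub-pixel refinement and consistency checks, but the underlying reason texture helps is the same as in this one-row example.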

Data acquisition

Their extensive features qualify both Ensenso 3D camera models for a wide range of demanding applications in the supply chain and logistics industry, such as 3D object recognition, classification and localization (e.g. quality assurance), logistics automation (e.g. (de-)palletizing), robot applications (e.g. bin picking), capture of objects up to 8 m³ (e.g. pallets), or automatic storage systems.

"Fizyr has integrated the Ensenso SDK in its software using a modern and fast wrapper," Herbert ten Have explains. "A great advantage is the 2D/3D combination, which allows the image from the 2D camera to be placed over the 3D point cloud as an overlay." This gives a more vivid impression of the scene. In addition, the camera image can be precisely aligned with the robot coordinate system by means of hand-eye calibration, ensuring accurate, targeted gripping.
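Neither Fizyr's wrapper nor any Ensenso SDK call is shown in the article, so the following plain-Python sketch only illustrates the underlying geometry. It assumes that the pose of the 2D camera relative to the 3D camera (`T_2d_from_3d`), the 2D camera's pinhole intrinsics (`fx`, `fy`, `cx`, `cy`), and the camera-to-robot-base transform obtained from a hand-eye calibration (`T_base_from_cam`) are already known; all names and numbers are hypothetical. Lens distortion and occlusion are ignored, and colors are taken from the nearest pixel.

```python
from typing import List, Optional, Sequence, Tuple

Matrix4 = List[List[float]]  # 4x4 homogeneous transform, row-major


def transform(T: Matrix4, p: Sequence[float]) -> Tuple[float, float, float]:
    """Apply a rigid 4x4 homogeneous transform to a 3D point."""
    x, y, z = p
    return tuple(T[r][0] * x + T[r][1] * y + T[r][2] * z + T[r][3] for r in range(3))


def project(p_cam: Sequence[float], fx: float, fy: float,
            cx: float, cy: float) -> Optional[Tuple[float, float]]:
    """Ideal pinhole projection (no lens distortion) into pixel coordinates (u, v)."""
    x, y, z = p_cam
    if z <= 0:
        return None  # point lies behind the 2D camera
    return fx * x / z + cx, fy * y / z + cy


def colorize(points, T_2d_from_3d: Matrix4, intrinsics, image) -> list:
    """Pair each 3D point with the 2D pixel it projects to (nearest pixel, occlusion ignored)."""
    fx, fy, cx, cy = intrinsics
    height, width = len(image), len(image[0])
    result = []
    for p in points:
        uv = project(transform(T_2d_from_3d, p), fx, fy, cx, cy)
        color = None
        if uv is not None:
            u, v = round(uv[0]), round(uv[1])
            if 0 <= u < width and 0 <= v < height:
                color = image[v][u]
        result.append((p, color))
    return result


# Hypothetical setup: the 2D camera sits 5 cm along +x of the 3D camera, same orientation.
T_2d_from_3d = [[1, 0, 0, -0.05], [0, 1, 0, 0], [0, 0, 1, 0], [0, 0, 0, 1]]
image = [[10 * v + u for u in range(4)] for v in range(4)]  # tiny 4x4 test image
overlay = colorize([(0.05, 0.0, 1.0)], T_2d_from_3d, (500.0, 500.0, 2.0, 1.0), image)
print(overlay[0][1])  # 12, the value at pixel u=2, v=1

# Hypothetical hand-eye result: camera 0.8 m above the base, looking straight down.
T_base_from_cam = [[1, 0, 0, 0.4], [0, -1, 0, 0], [0, 0, -1, 0.8], [0, 0, 0, 1]]
grasp_in_base = transform(T_base_from_cam, (0.1, 0.2, 0.7))  # approximately (0.5, -0.2, 0.1)
```

The same `transform` function serves both purposes: with the camera-to-camera extrinsics it builds the 2D overlay, and with the hand-eye transform it expresses a grasp point found in the camera frame in the coordinates the robot controller expects.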